How Impression-Level Revenue Data became the single most important signal in modern mobile UA, and what happens when it breaks.

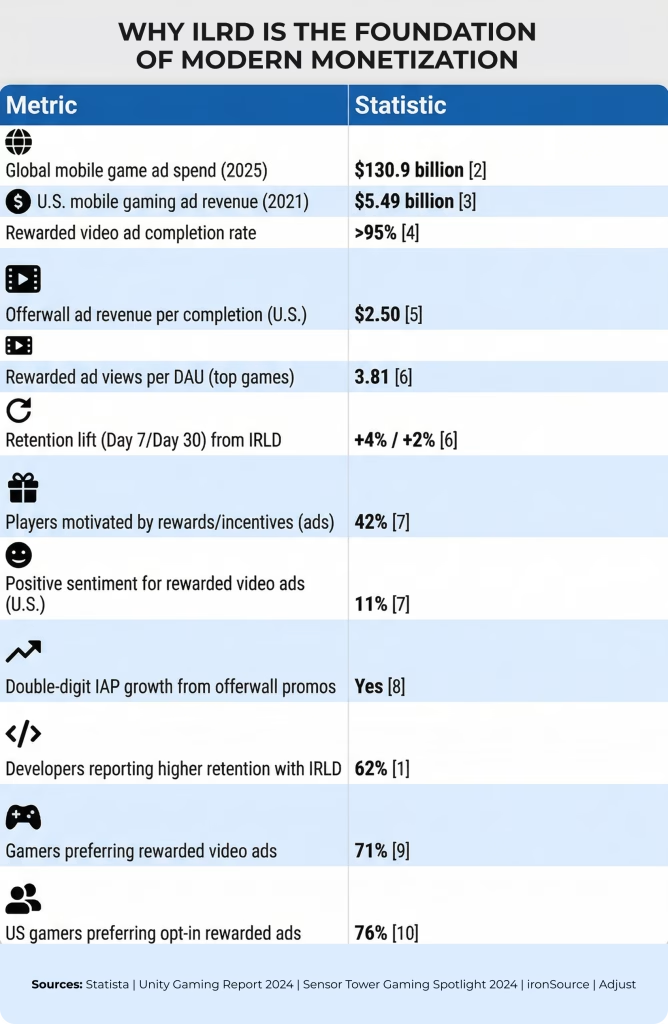

Aggregate revenue numbers don’t tell you much in automated UA. Seeing $27,000 in revenue yesterday doesn’t tell you whether your UA is profitable, whether your ad frequency test is working, or whether your cheap install channel is hurting long-term LTV. What matters is having a signal for each impression. That’s what IRLD gives you.

IRLD connects every ad impression to a user, device, and traffic source in real time. With this link, attribution is accurate, ROAS campaigns optimize on real data, and A/B tests move from guesswork to real measurement.

What IRLD Actually Does

The process is simple. When a user sees an ad, the SDK records the impression price and sends it to both your analytics pipeline (usually through an MMP like Tenjin) and the ad network running your UA campaigns.

This single event – impression ID, user ID, and revenue – connects product, monetization, and marketing. Without it, each area works with incomplete data. With it, you can track a user from the first ad impression to their last session, knowing exactly how much revenue they brought in at every step.

For hybrid monetization, where both IAA and IAP matter, IRLD is even more important. A user who never pays but watches 40 rewarded videos a day still has real LTV. Without IRLD, you miss that value.
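As a minimal sketch of the idea, the snippet below sums ad and purchase revenue per user into one LTV figure. The `RevenueEvent` type, the `"iaa"`/`"iap"` labels, and the $0.01 price per rewarded video are illustrative assumptions, not the actual event schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RevenueEvent:
    user_id: str
    source: str          # "iaa" for an ad impression, "iap" for a purchase
    revenue_usd: float


def ltv_by_user(events):
    """Sum ad and purchase revenue per user into one LTV figure."""
    totals = defaultdict(lambda: {"iaa": 0.0, "iap": 0.0})
    for event in events:
        totals[event.user_id][event.source] += event.revenue_usd
    return {
        user: {**parts, "ltv": parts["iaa"] + parts["iap"]}
        for user, parts in totals.items()
    }


events = [
    RevenueEvent("payer", "iap", 4.99),
    RevenueEvent("payer", "iaa", 0.02),
    # A non-paying user who watches 40 rewarded videos at a hypothetical $0.01 each.
    *[RevenueEvent("watcher", "iaa", 0.01) for _ in range(40)],
]
ltv = ltv_by_user(events)
```

In this toy data the `watcher` user has no IAP revenue at all, yet still ends up with a non-zero LTV that an IAP-only view would report as zero.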

Why We Use IRLD

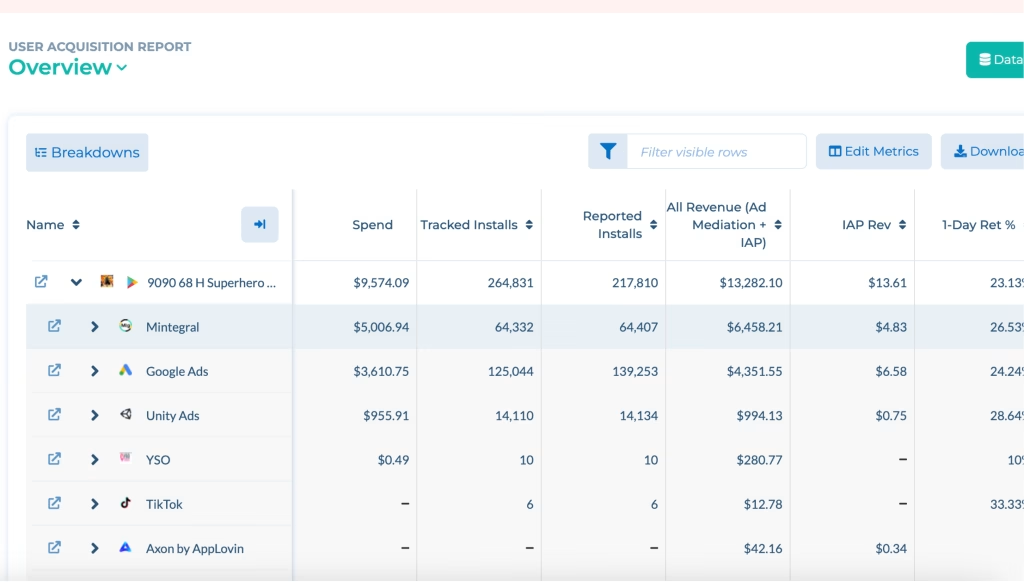

Thanks to IRLD (Impression-Level Revenue Data), we can estimate all revenue across both ad mediation (the layer that manages ad networks) and IAP (in-app purchases) in a unified view. This gives us a complete picture of each user’s true economic value, eliminates data silos, and improves revenue forecasting.

This combined revenue signal powers a predictive purchasing model that projects ROAS (return on ad spend) from Day 0 through Day 120, allowing us to make confident UA (user acquisition) investment decisions well before a cohort (a group of users who joined at the same time) fully matures. As a result, teams can optimize campaigns with greater speed and precision.

Use Cases That Matter

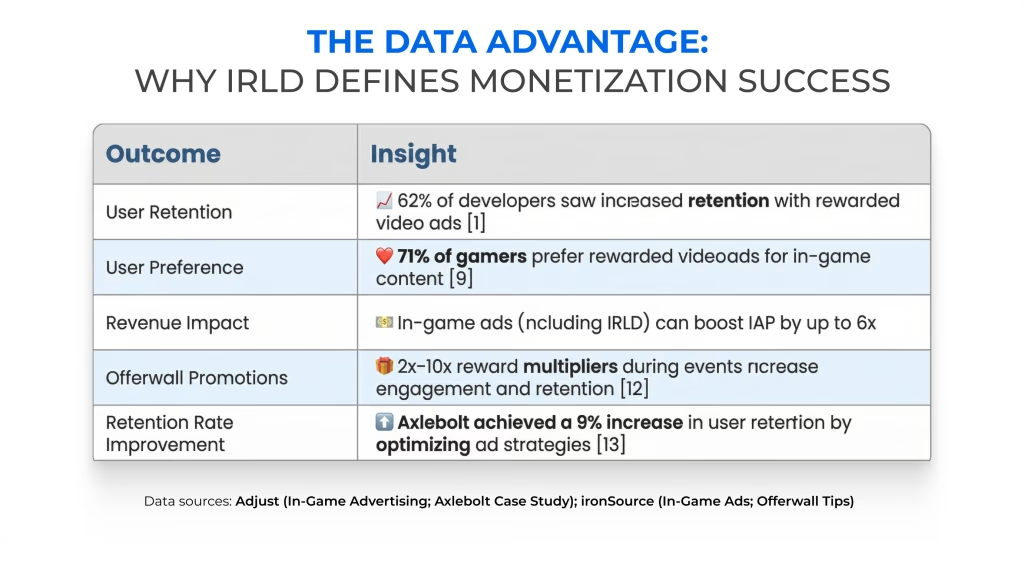

The business case for IRLD breaks down into five concrete areas, each of which is hard or impossible to do well without impression-level data.

1. Transparent LTV

We can now tell exactly how much revenue any given user, or cohort of users, has generated, factoring in both in-app purchases and ad revenue. For projects with ad-heavy monetization, this is the difference between knowing your business and guessing at it.

2. ROAS Campaign Optimization

Target ROAS campaigns work by feeding revenue signals back to the ad network’s machine learning model so it can find more users like your best spenders. Without IRLD, these campaigns fall back to optimizing for installs or IAP events, which are weak proxies for real revenue, especially in hybrid setups. Only impression-level data enables true revenue-based optimization.

3. Discrepancy Monitoring

We compare IRLD-reported revenue against what the networks ultimately paid out, and we run these comparisons systematically. Discrepancies below 10% are expected and acceptable. Anything above that is a flag. And, as we’ll cover shortly, the cause is often a bug actively corrupting your data and your campaign performance.

4. Traffic Quality Control

We’ve cut channels that appeared cheap on CPI but brought in users with zero ad revenue. These users aren’t cheap; they’re expensive, since their acquisition costs are never covered. IRLD shows this clearly. If an install source brings in users with no ad LTV, we drop it.

5. A/B Testing and Cohort Analysis

Cohort analysis is how we derive an actionable signal. IRLD enables per-user revenue attribution, which in turn enables cohort revenue aggregation. Both monetization tests (does adding a new network increase D7 ARPDAU?) and product tests (does this new feature affect ad LTV?) require impression-level data to be run correctly. Without it, you’re comparing groups using incomplete numbers.
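To illustrate how per-user attribution rolls up into a cohort comparison, here is a rough sketch that averages first-7-day ad revenue per user for each test group. The input shapes, group names, and numbers are assumptions made for the example; a real pipeline would read them from the attribution data.

```python
from collections import defaultdict


def d7_revenue_per_user(users, impressions):
    """Average ad revenue per user in the first 7 days after install, by group.

    users: {user_id: (group, install_day)}
    impressions: iterable of (user_id, day, revenue_usd)
    """
    revenue = defaultdict(float)
    for user_id, day, revenue_usd in impressions:
        group, install_day = users[user_id]
        if 0 <= day - install_day < 7:
            revenue[group] += revenue_usd

    group_sizes = defaultdict(int)
    for group, _ in users.values():
        group_sizes[group] += 1

    # Divide by every user in the group, including those with no impressions.
    return {group: revenue[group] / size for group, size in group_sizes.items()}


users = {"u1": ("control", 0), "u2": ("control", 0), "u3": ("variant", 1)}
impressions = [("u1", 0, 0.10), ("u1", 8, 0.50), ("u3", 3, 0.30)]
per_user = d7_revenue_per_user(users, impressions)
```

Note that `u2` has no impressions but still counts in the control denominator. Dropping users without events is exactly the kind of incomplete comparison that inflates one group against another.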

How the Data Actually Gets Collected: Bidding vs. Waterfall

To see why IRLD discrepancies happen, you need to know how ad mediation works today.

The modern stack is a hybrid. We start with Real-Time Bidding: all connected bidding networks respond within half a second with a price offer, and we take the highest. Say that’s $17. We then walk down a waterfall of non-bidding (price-floor) networks, querying them from the top down ($100, $90, $50, $35, $20), but we stop as soon as we reach the bidding winner’s price. There’s no reason to go lower when we already have a $17 bid.

Bidding networks know exactly what they paid. For those, IRLD transmits the exact bid: clean, accurate, reliable.

Waterfall networks don’t share their real price. For these, IRLD sends the price floor we set (for example, $15), even if the network served an ad at $17. This gap is built in. It’s not a bug, it’s just how these networks work.
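The flow described above can be sketched roughly as follows. This is a simplified model, not the mediation SDK’s logic: network names are made up, at least one bid is assumed, and each waterfall entry carries a hypothetical "actual" price only so the gap between what IRLD records and what the network really pays is visible.

```python
def run_hybrid_auction(bids, waterfall):
    """Return (network, irld_price, actual_price) for one impression.

    bids: {network: bid_usd} from bidding networks (assumed non-empty).
    waterfall: list of (network, floor_usd, actual_usd_or_None), where the
        last item is what the network would really pay at that floor,
        or None if it has no ad to serve.
    """
    best_bidder = max(bids, key=bids.get)
    best_bid = bids[best_bidder]

    # Walk the floors from the highest down, stopping at the bidding winner's price.
    for network, floor, actual in sorted(waterfall, key=lambda row: row[1], reverse=True):
        if floor <= best_bid:
            break
        if actual is not None:
            # Waterfall fill: IRLD only knows the floor, not the real price.
            return network, floor, actual

    # No waterfall fill above the bid: the bidder wins and reports its exact bid.
    return best_bidder, best_bid, best_bid


floors = [100.0, 90.0, 50.0, 35.0, 20.0]
bids = {"bidder_a": 17.0, "bidder_b": 12.0}

# Case 1: no waterfall network fills, so the $17 bidder wins with an exact price.
no_fill = [(f"floor_net_{int(f)}", f, None) for f in floors]
bid_result = run_hybrid_auction(bids, no_fill)

# Case 2: the $20 floor fills at a real price of $22, but IRLD records $20.
fill_at_20 = no_fill[:-1] + [("floor_net_20", 20.0, 22.0)]
floor_result = run_hybrid_auction(bids, fill_at_20)
```

In the second case the recorded and actual prices differ by design, which is the structural gap the discrepancy thresholds have to absorb.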

The waterfall is here to stay. Networks that rely solely on bidding usually generate 10–15% less revenue than when they also compete in the waterfall. Some GAM networks only work with price floors and never bid. Every percentage point of ARPDAU counts. We’re not leaving that revenue behind.

The practical implication: a sub-10% discrepancy between IRLD data and network payouts is normal and acceptable, largely because of this waterfall floor math. Above 10%, something is wrong.
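A minimal sketch of that check, assuming discrepancy is measured as the absolute gap between IRLD revenue and network payout, relative to the payout. The function names and the strict "above 10%" comparison are illustrative choices.

```python
def discrepancy(irld_usd, payout_usd):
    """Relative gap between IRLD-reported revenue and the network payout."""
    return abs(irld_usd - payout_usd) / payout_usd


def classify(irld_usd, payout_usd, threshold=0.10):
    """Flag anything above the threshold for investigation."""
    if discrepancy(irld_usd, payout_usd) > threshold:
        return "investigate"
    return "acceptable"


normal = classify(95.0, 100.0)     # IRLD under-reports by 5%
inflated = classify(42.0, 24.0)    # IRLD over-reports by 75%
```

Running this per network and per app version, rather than only on the total, makes it easier to see which integration a large gap comes from.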

From CPI to tROAS: The Evolution of UA Models

The shift from cost-per-install to revenue-based buying is worth tracing, because it explains exactly why IRLD is a structural requirement rather than a pleasant extra.

- CPI means paying a fixed price per install and hoping your targeting is good enough to make the math work. The risk is high. Cheap installs can end up being worthless.

- CPA (cost per action) means paying for a user who completes a specific event, such as reaching level 30 or finishing the tutorial. It’s more sophisticated, but the chosen action doesn’t always correlate cleanly with long-term LTV. An iPhone 13 user reaching level 100 might deliver $1 in lifetime revenue; the same action on an iPhone 17 Pro might deliver $4.50.

- tROAS means buying users who hit a target revenue by a set day. This is revenue-based buying and needs IRLD to work. The algorithm needs real impression-level revenue signals to find users like your best spenders.

The learning phase for tROAS is non-trivial: Day 0 optimization takes roughly a week; Day 7 takes 15–20 days; Day 30 requires running $500/day for 90 days before the model stabilizes. Not everyone can afford to go that deep. For most studios, optimizing for Day 0 and Day 7 simultaneously, which increases the volume of qualifying users compared to either metric alone, is the practical sweet spot.

To start, you need at least 10 monetization postbacks from all networks in the last 7 days, or 7,000 installs per week for hybrid campaigns. The algorithm can’t handle sudden changes. Target adjustments are limited to 10% per change, no more than twice a week. Bigger changes break the model.
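The two adjustment limits can be expressed as a small guard before any target change is pushed. This is a sketch under assumptions: "twice a week" is interpreted as a rolling 7-day window, and the function and constant names are hypothetical rather than part of any ad network API.

```python
from datetime import date, timedelta

MAX_STEP = 0.10              # largest relative change per adjustment
MAX_CHANGES_PER_WEEK = 2     # assumed to mean a rolling 7-day window


def can_adjust_target(current, proposed, change_dates, today):
    """Check a proposed tROAS target against the step and frequency limits."""
    step = abs(proposed - current) / current
    week_start = today - timedelta(days=7)
    recent = [d for d in change_dates if week_start < d <= today]
    # Small tolerance so an exact 10% step is not rejected by float rounding.
    return step <= MAX_STEP + 1e-9 and len(recent) < MAX_CHANGES_PER_WEEK


today = date(2024, 1, 10)
ok_step = can_adjust_target(1.00, 1.10, [], today)
too_big = can_adjust_target(1.00, 1.25, [], today)
too_often = can_adjust_target(1.00, 1.05, [date(2024, 1, 8), date(2024, 1, 9)], today)
```

A larger move, such as going from 100% to 125%, would have to be split into several steps spread over more than one week.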

One more constraint that’s easy to underestimate: creative. ROAS campaigns don’t scale without a continuous supply of fresh creatives. We regularly run dozens, sometimes hundreds, of new creatives across our top titles. Without that pipeline, the algorithms run out of signal variation and performance decays.

Discrepancies: What’s Normal, What’s Fixable, and What’s Catastrophic

Every IRLD setup has discrepancies. The key is knowing if they’re structural and acceptable, or if they’re signs of a bigger problem.

The Acceptable Causes

- Alternative stores like RuStore or sideloads cause issues. Users who install outside Google Play often don’t have MMP or Google services set up right. Ads still show and earn revenue, but IRLD events don’t fire. The revenue is real, but we can’t see it in analytics. In markets like Russia, this can push discrepancies up to 14–16%.

- Facebook: Meta prohibits passing exact impression prices to the client. Instead, IRLD receives the average eCPM for that game and country for the previous day. When any individual impression differs considerably from that average (and high-value impressions frequently do), a discrepancy is guaranteed. It’s not fixable; it’s a structural constraint of the network.

- Waterfall floor math means we might request $15, the network serves at $17, and IRLD records $15. This small difference is built in and not worth pursuing.

Fixable and Catastrophic Causes

- Build errors: The most common critical failure mode. A new app version ships with a broken or duplicated IRLD event handler. If you roll out to 20% of users and IRLD stops firing for those users, your network algorithms immediately notice a drop in traffic quality, even though nothing about the users’ actual quality has changed. Your tROAS campaigns start making worse decisions. Your A/B tests produce invalid results. The entire measurement infrastructure is corrupted until the bug is fixed and propagated. IRLD event integrity must be verified in QA before every release.

- Floor mismatch (GAM networks): Your mediation dashboard shows a $20 price floor. The network’s own settings have $5 entered, possibly by mistake, possibly left over from an old configuration. Bidding completes at $12. You start walking down the waterfall. You query at $20 (your floor). The network sees $5 (its floor), finds an ad at $6, and serves it. You receive $6 in revenue. IRLD records $20 because that’s the floor you set. The discrepancy is enormous and sends a completely false signal to every downstream system. This is why GAM network configurations need periodic audits.

- Currency unit errors: In one of our titles, a non-standard in-game ad network operating outside standard mediation transmitted impression prices in cents rather than dollars. The result was a 100x inflation of reported IRLD revenue. We suddenly started seeing user LTV figures that looked extraordinary, but they were fictional. The error is obvious once caught, but the downstream damage to campaign optimization during the period it ran was real.

- Event duplication happens when a developer fires the IRLD event manually, even though the SDK already handles it. Every impression gets double-counted. Analytics shows double revenue, marketing sees great ROI, and budgets get scaled up. But the real economics are negative. The rule is simple: if you’re using our SDK, don’t touch IRLD event dispatch.
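Two of these failure modes, duplicated events and unit errors, lend themselves to a simple automated audit. The sketch below is one possible shape for such a check; the event tuple layout and the $1-per-impression ceiling (equivalent to a $1,000 eCPM) are assumptions for illustration and would need tuning per title.

```python
from collections import Counter

# Hypothetical ceiling: $1 per impression corresponds to a $1,000 eCPM.
MAX_PLAUSIBLE_IMPRESSION_USD = 1.0


def audit_events(events):
    """events: list of (impression_id, network, revenue_usd).

    Returns impression IDs seen more than once, plus events whose
    per-impression revenue is implausibly high (a hint of a unit error).
    """
    counts = Counter(impression_id for impression_id, _, _ in events)
    duplicates = sorted(i for i, n in counts.items() if n > 1)
    suspicious = [e for e in events if e[2] > MAX_PLAUSIBLE_IMPRESSION_USD]
    return duplicates, suspicious


events = [
    ("imp-1", "net_a", 0.017),
    ("imp-1", "net_a", 0.017),   # same impression fired twice
    ("imp-2", "net_b", 1.7),     # 1.7 cents read as 1.7 dollars
]
duplicates, suspicious = audit_events(events)
```

A check like this catches the symptoms, not the cause: a flagged network or build still needs someone to look at the event handler or the network’s price units.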

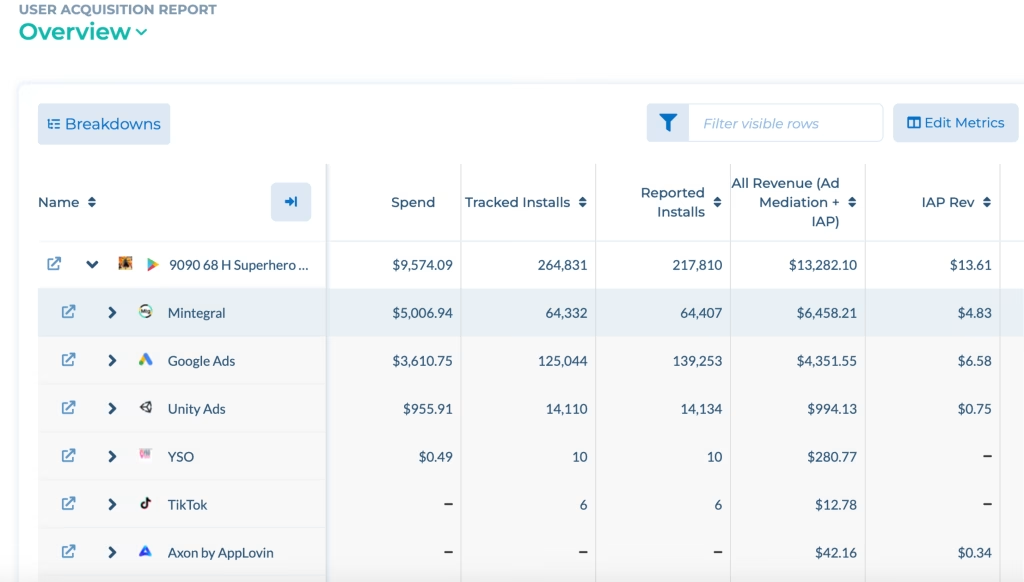

Two Real-World Cases From Our Internal Report

An abstract discussion of discrepancy types is less useful than examining what they actually produce in practice. Here are two examples from a recent internal audit.

Web Shot: Critical Inflation

The Gatsby network reported IRLD revenue of $42, while actual network payouts were $24 – a discrepancy of roughly 75%. The cause was the currency-unit error described above: prices sent in cents were interpreted as dollars, with a 100x multiplier.

The error was fixed, but some affected users remained for a while. Even 1–5% of users with 100x-inflated revenue can keep discrepancies high for weeks. Marketing saw fake LTV signals during this time. As a result, ROAS campaigns made buying decisions based on revenue that wasn’t real.

Structural Noise

A 16% discrepancy – above the 10% threshold, but explainable. A significant portion of this title’s Russian user base installed it from alternative stores. Firebase and the ad analytics stack don’t initialize correctly in those environments. Revenue from ad impressions is real; IRLD events for those users don’t fire.

Compounding this: network restrictions in Russia are increasingly causing IRLD postbacks to fail to reach our servers even for users who installed from Google Play. Signal deterioration in that market is worsening. For campaigns targeting Russian users specifically, the data quality constraints need to be factored into interpretation. For campaigns targeting other markets, the impact is limited since the Russian user segment’s noise doesn’t meaningfully distort optimization for clean traffic elsewhere.

How to Activate IRLD (and What to Verify)

If you’re using our mediation, setup is minimal. For our own titles or publishing partners, IRLD is enabled by default. No extra configuration is needed. If you’re not sure, ask your monetization manager. They can check the flag and confirm events are flowing.

Once active, data flows to two destinations: your internal Data Lake via Tenjin (for attribution and cohort analytics) and directly to ad networks (TikTok, Google Ads, and others) to power ROAS campaign optimization. Both pipelines run simultaneously from the same impression event.

What to verify before every build release: IRLD events fire correctly, aren’t duplicated, and use the right currency and values. Spending 30 minutes in QA is worth far more than shipping a broken build that runs for a week before anyone notices the problem.

With IRLD in place, marketing is revenue-based, cohort analysis is meaningful, and A/B testing for monetization is valid. Without it, you’re optimizing against proxies and guessing at LTV. With it, you can trace every dollar from the ad impression that triggered an install to the final session before churn.

The technology is mature and mostly automated. The failure modes are real but well understood. The main risk isn’t complexity. It’s operational discipline: checking event integrity in every build, monitoring discrepancy reports, and catching problems early.

In this market, even small ARPDAU gains take months of work, and UA efficiency decides if a project can scale. Accurate impression-level revenue data isn’t optional. It’s the base for everything else.